Pentaho Data Integration (Kettle): Transforming Data Workflows in the Modern Enterprise

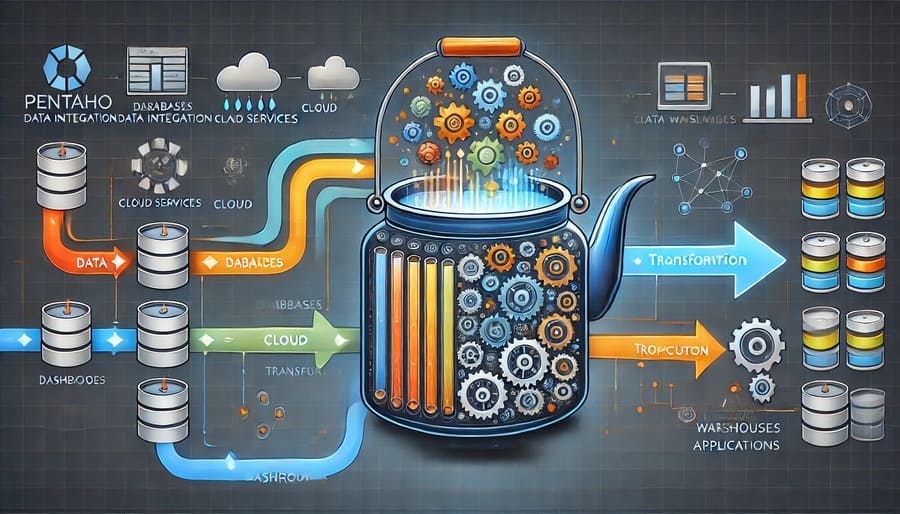

In today’s data-driven business landscape, organizations need robust tools to extract, transform, and load (ETL) data efficiently. Pentaho Data Integration, also known as Kettle, stands out as a powerful open-source solution that has transformed how businesses handle data integration challenges. This comprehensive guide explores Kettle’s capabilities, benefits, and practical applications to help data engineers and analysts leverage its full potential.

Pentaho Data Integration is a component of the larger Pentaho suite, providing open-source ETL capabilities that enable users to extract data from diverse sources, transform it according to business rules, and load it into target destinations. Originally developed as an independent project called Kettle (Kettle E.T.T.L. Environment), it was later acquired by Pentaho and has since evolved into a mature, enterprise-ready data integration platform.

The name “Kettle” is a recursive acronym, usually expanded as “Kettle Extraction, Transport, Transformation, and Loading Environment.” The tool's design philosophy favors simplicity and accessibility.

One of Kettle’s most compelling features is its intuitive graphical interface, known as Spoon. This drag-and-drop environment allows data engineers to design complex ETL processes without extensive coding. The visual workflow representation makes it easier to:

- Design and debug transformations and jobs

- Visualize data flows between steps

- Monitor execution statistics in real time

- Document processes through the visual representation itself

Kettle excels in connecting to diverse data sources through its extensive library of pre-built connectors, including:

- Relational databases (MySQL, PostgreSQL, Oracle, SQL Server, etc.)

- NoSQL databases (MongoDB, Cassandra)

- Cloud platforms (AWS, Azure, Google Cloud)

- Big data ecosystems (Hadoop, Hive, HBase)

- File-based storage (CSV, Excel, XML, JSON)

- APIs and web services

Beyond simple data movement, Kettle provides robust transformation capabilities:

- Data cleansing and validation

- Lookups and joins across multiple sources

- Aggregation and filtering

- Calculated fields and business rules implementation

- Advanced scripting through built-in JavaScript and Java steps, with Python and R available through additional plugins

- Error handling and logging
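
To make the list above concrete, here is a minimal Python sketch of the same ideas: cleansing, a lookup, an aggregation, and diverting bad rows. It is not Kettle's API. The sample rows, the `REGIONS` lookup table, and the function names are all hypothetical, and each function only stands in for what a step would do inside a transformation.

```python
from collections import defaultdict

# Hypothetical sample rows, standing in for the output of an input step.
ORDERS = [
    {"order_id": 1, "country": " us ", "amount": "19.90"},
    {"order_id": 2, "country": "DE", "amount": "5.00"},
    {"order_id": 3, "country": "us", "amount": "not-a-number"},
    {"order_id": 4, "country": "de", "amount": "12.50"},
]

# Hypothetical lookup table (country code -> region).
REGIONS = {"US": "Americas", "DE": "EMEA"}


def cleanse(rows, rejected):
    """Normalize the country code, parse the amount, divert unparseable rows."""
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            rejected.append(row)  # error handling: keep the bad row for review
            continue
        yield {**row, "country": row["country"].strip().upper(), "amount": amount}


def lookup_region(rows):
    """Add a region column from the lookup table."""
    for row in rows:
        yield {**row, "region": REGIONS.get(row["country"], "Unknown")}


def total_by_region(rows):
    """Aggregate amounts per region."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["region"]] += row["amount"]
    return dict(totals)


rejected = []
totals = total_by_region(lookup_region(cleanse(ORDERS, rejected)))
```

Each function consumes a stream of rows and yields a new one, which loosely mirrors how rows flow from step to step. The row with the invalid amount ends up in `rejected` instead of stopping the run.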

Pentaho Data Integration accommodates growing data volumes through:

- Parallel processing capabilities

- Clustering support for distributed execution across Carte servers

- In-memory processing for performance-critical operations

- Native integration with big data technologies

- Incremental loading options for efficient processing
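
Incremental loading is often implemented with a "high-water mark": only rows changed since the last successful load are processed. The Python sketch below illustrates that idea under simple assumptions. It is not Kettle code, and the `updated_at` column and the function name are hypothetical.

```python
from datetime import datetime


def incremental_batch(rows, last_loaded_at):
    """Return the rows changed after the watermark, plus the new watermark."""
    fresh = [r for r in rows if r["updated_at"] > last_loaded_at]
    # If nothing changed, the watermark stays where it was.
    new_watermark = max((r["updated_at"] for r in fresh), default=last_loaded_at)
    return fresh, new_watermark


# Hypothetical source rows carrying a last-modified timestamp.
SOURCE = [
    {"id": 1, "updated_at": datetime(2024, 1, 1, 8, 0)},
    {"id": 2, "updated_at": datetime(2024, 1, 2, 9, 30)},
    {"id": 3, "updated_at": datetime(2024, 1, 3, 7, 15)},
]

batch, watermark = incremental_batch(SOURCE, datetime(2024, 1, 1, 12, 0))
```

In a real pipeline the watermark would be persisted (for example in a control table) after each successful run, so the next run picks up where the previous one stopped.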

Kettle serves as an ideal tool for building and maintaining data warehouses, handling:

- Initial data loading from source systems

- Incremental refreshes and change data capture

- Slowly changing dimension management

- Fact table loading and aggregation

- Real-time or scheduled data updates
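
Slowly changing dimension management is the least self-explanatory item in this list. Kettle ships a Dimension lookup/update step for it. The Python sketch below is not that step's implementation, only an illustration of the Type 2 logic it automates: expire the current row and append a new version. The column names and the `OPEN_END` marker are assumptions.

```python
from datetime import date

OPEN_END = date(9999, 12, 31)  # assumed marker for "still current"


def apply_scd2(dimension, incoming, today):
    """Apply Type 2 changes in place: expire the current row, append a new version."""
    current = {r["customer_id"]: r for r in dimension if r["valid_to"] == OPEN_END}
    for record in incoming:
        existing = current.get(record["customer_id"])
        if existing is not None and existing["city"] == record["city"]:
            continue  # tracked attribute unchanged, nothing to do
        version = 1
        if existing is not None:
            existing["valid_to"] = today  # close the old version
            version = existing["version"] + 1
        new_row = {
            "customer_id": record["customer_id"],
            "city": record["city"],
            "version": version,
            "valid_from": today,
            "valid_to": OPEN_END,
        }
        dimension.append(new_row)
        current[record["customer_id"]] = new_row
    return dimension


# Hypothetical dimension table with one existing customer.
dim = [{"customer_id": 1, "city": "Berlin", "version": 1,
        "valid_from": date(2023, 1, 1), "valid_to": OPEN_END}]
changes = [{"customer_id": 1, "city": "Munich"}, {"customer_id": 2, "city": "Paris"}]
apply_scd2(dim, changes, today=date(2024, 6, 1))
```

After the call, the Berlin row is closed as of the load date, a second version records the move to Munich, and the new customer appears as version 1. History is preserved rather than overwritten, which is the point of Type 2.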

For organizations focused on analytics, Kettle streamlines:

- Dimensional modeling implementation

- Star and snowflake schema creation

- KPI calculation and metric standardization

- Data quality enforcement

- Self-service data preparation

When organizations undergo system changes, Kettle facilitates:

- Legacy system data migration

- Application integration through data synchronization

- Master data management processes

- System consolidation during mergers and acquisitions

- Cloud migration support

Getting started with Kettle is straightforward:

- Download the Community Edition from the Hitachi Vantara website

- Ensure a compatible Java Runtime Environment is installed

- Extract the downloaded files to your preferred location

- Launch the Spoon interface by running Spoon.bat (Windows) or spoon.sh (Linux/Mac)

A basic transformation follows these steps:

- Define input sources through appropriate steps (Table input, CSV file input, etc.)

- Add transformation steps for data manipulation (Filter rows, Merge join, Calculator, etc.)

- Configure output destinations (Table output, Text file output, etc.)

- Connect steps with hops to establish the data flow

- Run and monitor the transformation
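
Spoon saves a transformation as an XML file (`.ktr`), so the steps and hops described above can also be inspected outside the GUI. The Python sketch below lists them from a heavily simplified sample. The element layout shown is an assumption reduced to the bare minimum, and real files carry far more settings per step.

```python
import xml.etree.ElementTree as ET

# Simplified, hypothetical .ktr content; a real file has many more elements.
KTR_SAMPLE = """
<transformation>
  <info><name>load_customers</name></info>
  <order>
    <hop><from>CSV file input</from><to>Filter rows</to><enabled>Y</enabled></hop>
    <hop><from>Filter rows</from><to>Table output</to><enabled>Y</enabled></hop>
  </order>
  <step><name>CSV file input</name><type>CsvInput</type></step>
  <step><name>Filter rows</name><type>FilterRows</type></step>
  <step><name>Table output</name><type>TableOutput</type></step>
</transformation>
"""


def describe(ktr_xml):
    """Return ({step name: step type}, [(from, to), ...]) for enabled hops."""
    root = ET.fromstring(ktr_xml.strip())
    steps = {s.findtext("name"): s.findtext("type") for s in root.findall("step")}
    hops = [
        (h.findtext("from"), h.findtext("to"))
        for h in root.iter("hop")
        if h.findtext("enabled") == "Y"
    ]
    return steps, hops


steps, hops = describe(KTR_SAMPLE)
```

Because the files are plain XML, they also work well with version control and code review, which helps once a project grows beyond a handful of transformations.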

Experienced Kettle users recommend:

- Breaking complex processes into manageable transformations and jobs

- Using parameters for dynamic configuration

- Implementing reusable components through metadata injection

- Building comprehensive logging for troubleshooting

- Establishing naming conventions for consistent development
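
As an illustration of parameter-driven configuration, the Python sketch below assembles a command line for Pan, Kettle's command-line runner for transformations, using its `-file`, `-level`, and `-param:` options. The file path, parameter names, and the `pan_command` helper are hypothetical, and the launcher location depends on where the distribution was extracted.

```python
import shlex


def pan_command(ktr_path, params, log_level="Basic", launcher="./pan.sh"):
    """Build an argument list that runs one transformation with named parameters."""
    cmd = [launcher, f"-file={ktr_path}", f"-level={log_level}"]
    # Sort for a stable, reviewable command line.
    cmd += [f"-param:{name}={value}" for name, value in sorted(params.items())]
    return cmd


# Hypothetical transformation and parameter values.
cmd = pan_command(
    "/opt/etl/load_customers.ktr",
    {"SOURCE_DIR": "/data/in", "LOAD_DATE": "2024-01-31"},
)
print(shlex.join(cmd))  # shell-ready form, e.g. for a cron entry
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` from a scheduler or wrapper script, so the same transformation can be reused across environments by changing only the parameter values.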

As an open-source solution, Kettle offers significant advantages:

- Zero licensing costs for the Community Edition

- Reduced total cost of ownership

- Optional paid support and additional features through an Enterprise Edition subscription

- No per-user or per-core pricing complexity

- Freedom from vendor lock-in

The vibrant Kettle community provides:

- Active forums for troubleshooting and knowledge sharing

- Extensive documentation and tutorials

- Community-contributed plugins and extensions

- Regular updates and improvements

- Diverse use cases across industries

Kettle’s open architecture enables:

- Custom plugin development for specialized needs

- Integration with existing technology stacks

- Adaptation to unique business requirements

- Contribution back to the open-source community

- Internal expertise development without vendor dependence

While powerful, Kettle presents certain challenges:

- Enterprise support requires commercial subscription

- Performance tuning may be necessary for very large datasets

- Advanced features may require technical expertise

- Governance and security need careful configuration

- Community versions may lag behind enterprise releases

The Pentaho ecosystem continues to evolve with:

- Enhanced cloud-native capabilities

- Improved big data integration

- Advanced machine learning preprocessing

- Real-time streaming data support

- Deeper DevOps and DataOps integration

Pentaho Data Integration (Kettle) remains one of the most capable open-source ETL tools available, offering a compelling combination of accessibility, functionality, and cost-effectiveness. For organizations seeking to streamline their data workflows without significant investment in proprietary solutions, Kettle provides a mature, battle-tested platform that can handle enterprise-scale challenges while remaining accessible to teams of all sizes.

Whether you’re building a data warehouse, migrating systems, or enabling business intelligence, Pentaho Data Integration offers the flexibility and power to transform your data integration processes into efficient, reliable workflows that deliver business value.
